05 — Agentic AI & Natural Language

The Overnight PhD for General Contractors

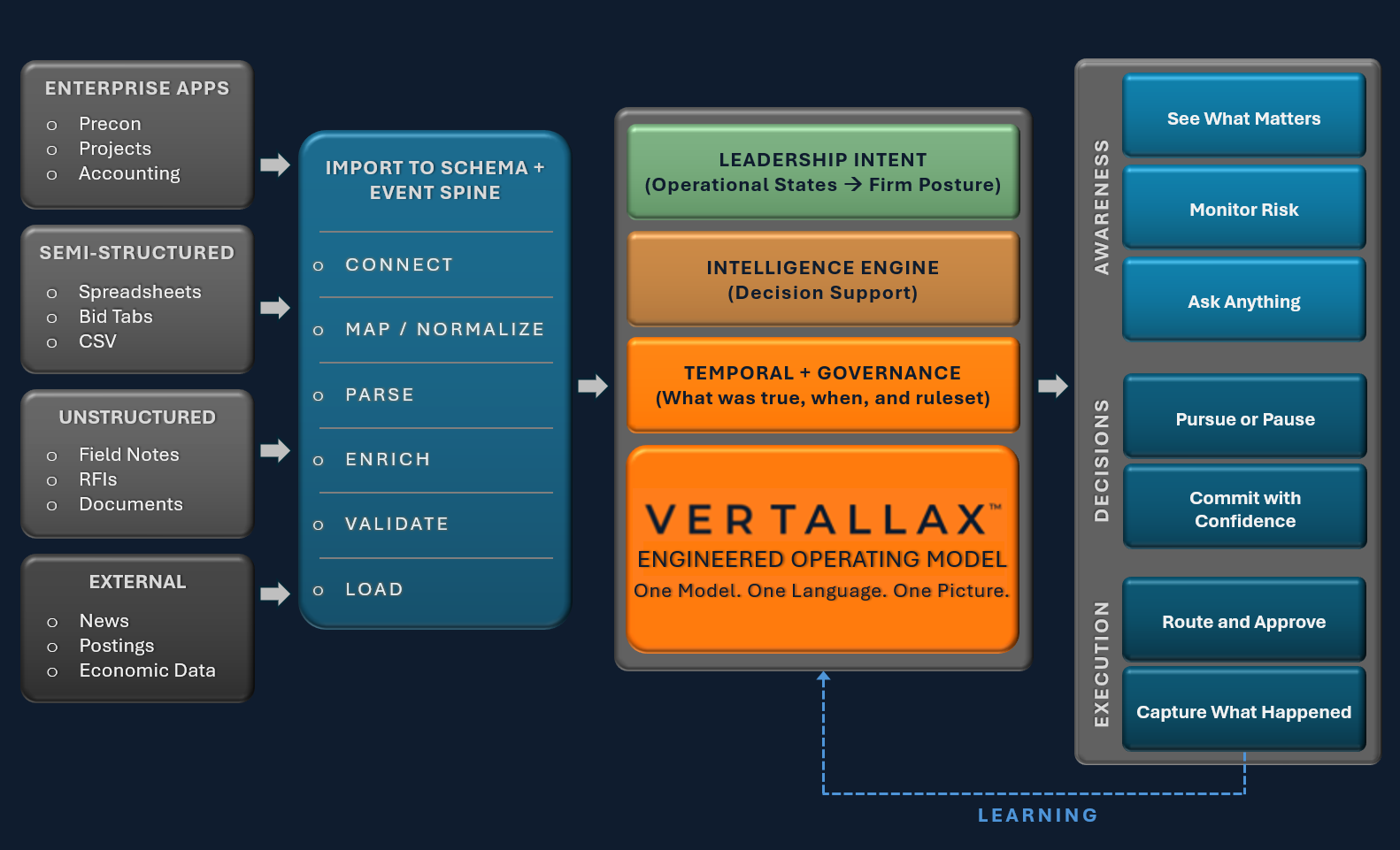

The AI layer does more than answer questions: it reasons across tools, constructs multi-step queries, and creates structured operational data from natural language. Every response is grounded in your firm's canonical records, not approximations.

Just Ask Interface

The Just Ask interface at /admin/ask/ gives natural-language access to all of the firm's operational data. Ask about pipeline status, resource availability, project health, or pricing benchmarks and get precise, sourced answers from live firm data.

Agentic Tool Loop

The AI does not stop at a single search. It reasons in a loop over the Phase A read-only tools: opportunity lookup, stage query, project status, resource capacity, price intelligence, pipeline summary, and firm context. The result is multi-step inference, not single-pass retrieval.
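To make the loop concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not the product's implementation: the tool functions, the in-memory records, and the `plan_next` planner (a stand-in for the model's reasoning) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical in-memory records standing in for the firm's canonical data.
OPPORTUNITIES = {
    "OPP-1": {"name": "Riverside Clinic", "stage": "Proposal", "project_id": "PRJ-9"},
}
PROJECTS = {"PRJ-9": {"status": "On track"}}


# Read-only tools: each takes one argument and returns a record (or {}).
def opportunity_lookup(opp_id: str) -> dict:
    return OPPORTUNITIES.get(opp_id, {})


def project_status(project_id: str) -> dict:
    return PROJECTS.get(project_id, {})


TOOLS: dict[str, Callable[[str], dict]] = {
    "opportunity_lookup": opportunity_lookup,
    "project_status": project_status,
}


@dataclass
class Step:
    tool: str
    arg: str
    result: dict


def plan_next(opp_id: str, history: list[Step]) -> Optional[tuple[str, str]]:
    """Stand-in for the model: pick the next tool call, or None to stop."""
    if not history:
        return ("opportunity_lookup", opp_id)
    last = history[-1]
    if last.tool == "opportunity_lookup" and "project_id" in last.result:
        # The first result tells us which project to inspect next.
        return ("project_status", last.result["project_id"])
    return None


def run_tool_loop(opp_id: str, max_steps: int = 5) -> list[Step]:
    """Call tools until the planner stops or the step budget runs out."""
    history: list[Step] = []
    for _ in range(max_steps):
        call = plan_next(opp_id, history)
        if call is None:
            break
        tool, arg = call
        history.append(Step(tool, arg, TOOLS[tool](arg)))
    return history
```

Each step feeds the next: the project status call is only possible because the opportunity lookup returned a project ID. The `max_steps` budget is an assumed safeguard that keeps the loop bounded.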

NL Opportunity Creation

Describe a new pursuit in plain English and the system populates the canonical fields (stage, owner, lead type, budget range, market sector) via the get_opportunity_defaults() cascade, drawing on firm history and the canonical operating model.
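One way to picture a defaults cascade is as a layered lookup where the first layer with a value wins. The sketch below is an assumption about how such a cascade could work: the signature of `get_opportunity_defaults()`, the layer order, the snake_case field names, and the sample values are all hypothetical.

```python
from collections import ChainMap

# Field names taken from the description above, written in snake_case (assumed).
FIELDS = ("stage", "owner", "lead_type", "budget_range", "market_sector")


def get_opportunity_defaults(parsed: dict, firm_history: dict, operating_model: dict) -> dict:
    """Resolve each field from the first layer that supplies a non-empty value.

    Assumed layer order: values parsed from the description, then firm
    history, then the operating model's baseline defaults.
    """
    layers = (parsed, firm_history, operating_model)
    # Drop empty values so a blank in an earlier layer does not mask a later one.
    cleaned = [{k: v for k, v in layer.items() if v not in (None, "")} for layer in layers]
    cascade = ChainMap(*cleaned)
    return {name: cascade.get(name) for name in FIELDS}


# Hypothetical inputs for a pursuit described in plain English.
parsed = {"market_sector": "Healthcare", "budget_range": "$5M-$10M", "owner": None}
firm_history = {"owner": "J. Rivera", "lead_type": "Referral"}
operating_model = {"stage": "Lead", "lead_type": "Inbound"}

defaults = get_opportunity_defaults(parsed, firm_history, operating_model)
```

Here `owner` falls through to firm history because the description did not name one, and `stage` falls through to the operating model because neither earlier layer set it.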

Structured Output Engine

Every AI response is grounded in structured records, not hallucinated summaries. Answers reference canonical IDs, field values, and timestamps. The AskLog model captures every query, tool call, and response for audit and analytics, so every decision can be traced back to a record.
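As a rough illustration of what an audit record of this kind could hold, the sketch below uses plain Python dataclasses. The class names `AskLogEntry` and `ToolCall` and all of their fields are hypothetical; the actual AskLog model's schema is not described here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ToolCall:
    tool: str              # name of the tool that was invoked
    arguments: dict        # arguments passed to the tool
    record_ids: list[str]  # canonical IDs of the records the tool returned


@dataclass
class AskLogEntry:
    query: str
    response: str
    tool_calls: list[ToolCall] = field(default_factory=list)
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def cited_records(self) -> list[str]:
        """Unique record IDs touched by any tool call, in first-seen order."""
        seen: dict[str, None] = {}
        for call in self.tool_calls:
            for record_id in call.record_ids:
                seen.setdefault(record_id, None)
        return list(seen)


entry = AskLogEntry(
    query="Is the Riverside Clinic pursuit on track?",
    response="OPP-1 is in Proposal; linked project PRJ-9 is on track.",
    tool_calls=[
        ToolCall("opportunity_lookup", {"id": "OPP-1"}, ["OPP-1"]),
        ToolCall("project_status", {"id": "PRJ-9"}, ["PRJ-9", "OPP-1"]),
    ],
)
```

Storing the record IDs alongside each tool call is what makes an answer traceable: `entry.cited_records()` lists every record the response relied on.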

Decision Support

The Intelligence Engine surfaces Go/No-Go signals, at-risk pursuits, expiring opportunities, resource overload warnings, and margin anomalies, and presents them as actionable intelligence in the Just Ask interface and the Daily Plumb feed.
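Two of these signals can be pictured as simple rules over dated and allocated records. The sketch below is illustrative only: the function names, the 14-day window, the 40-hour capacity, and the record shapes are assumptions, not the Intelligence Engine's actual rules.

```python
from datetime import date, timedelta


def expiring_opportunities(opps: list[dict], today: date, window_days: int = 14) -> list[str]:
    """IDs of opportunities whose due date falls within the next window_days."""
    cutoff = today + timedelta(days=window_days)
    return [o["id"] for o in opps if today <= o["due"] <= cutoff]


def overloaded_resources(allocations: dict[str, float], capacity_hours: float = 40) -> list[str]:
    """Names of people allocated more hours than the assumed weekly capacity."""
    return sorted(name for name, hours in allocations.items() if hours > capacity_hours)


# Hypothetical sample data.
today = date(2024, 1, 1)
opps = [
    {"id": "OPP-1", "due": date(2024, 1, 10)},   # inside the window
    {"id": "OPP-2", "due": date(2024, 3, 1)},    # too far out
    {"id": "OPP-3", "due": date(2023, 12, 20)},  # already past due
]
expiring = expiring_opportunities(opps, today)
overloaded = overloaded_resources({"Ana": 46, "Ben": 38, "Cy": 52})
```

A production rule set would presumably weigh more context, but the shape is the same: each signal is a query over canonical records that returns the IDs a person should look at.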

Compounding Data Moat

Every captured decision, correction, and enrichment becomes training signal for the next query. The longer your firm uses Vertallax, the sharper its intelligence becomes, because the system's context is your firm's unique operational history, not generic benchmarks.